SambaNova and Intel Announce Blueprint for Heterogeneous Inference: GPUs for Prefill, SambaNova RDUs for Decode, and Intel® Xeon® 6 CPUs for Agentic Tools

Key Highlights

- Coding agents are exposing the limits of GPU-only infrastructure, making each phase of the pipeline mission-critical: efficient prefill, high-throughput decoding, and high-performance agent task execution across a broad software ecosystem.

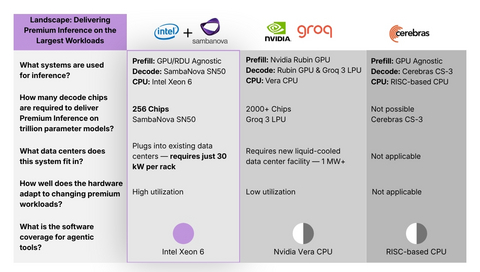

- Intel and SambaNova are delivering a heterogeneous hardware solution combining GPUs for prefill, SambaNova RDUs for decode, and Intel® Xeon® 6 CPUs for agentic tools and system orchestration.

- This production‑scale system will be available to enterprises and cloud platforms in H2 2026 with full support for the AI software stack.

- Under a signed agreement, SambaNova is standardizing on Xeon 6 as the host CPU that is paired with SambaNova RDUs as the inference backbone of this agentic AI solution.

SAN JOSE, Calif.--(BUSINESS WIRE)--SambaNova today announced the next phase of its collaboration with Intel: a heterogeneous hardware solution that combines GPUs for prefill, Intel Xeon® 6 processors as both host and “action” CPUs, and SambaNova RDUs for decode to deliver premium inference for the most demanding Agentic AI applications. The design will be made available in H2 2026 to enterprises, cloud providers, and sovereign AI programs that want to run coding agents and other agentic workloads at scale.

“Agentic AI is moving into production — and the winning pattern we’re seeing is GPUs to start the job, Intel Xeon 6 to run it, and SambaNova RDUs to finish it fast,” said Rodrigo Liang, CEO and co‑founder of SambaNova Systems. “Together with Intel, we’re giving customers a blueprint they can deploy in existing air‑cooled data centers, with broad x86 coverage for the coding agents and tools they already use today.”

“The data center software ecosystem is built on x86, and it runs on Xeon — providing a mature, proven foundation that developers, enterprises, and cloud providers rely on at scale,” said Kevork Kechichian, Executive Vice President and General Manager of the Data Center Group (DCG) at Intel Corporation. “Workloads of the future will require a heterogeneous mix of computing, and this collaboration with SambaNova delivers a cost‑efficient, high‑performance inference architecture designed to meet customer needs at scale — powered by Xeon 6.”

Agentic AI moves mainstream

Agentic AI has moved from demos to deployments: coding agents now compile and run code, call tools and APIs, query databases, and coordinate workflows, all backed by fast, low‑latency large‑model inference. In the process, they are exposing the limits of GPU‑only stacks. GPUs handle prefill, but CPUs and dedicated inference accelerators now determine how quickly and efficiently real‑world agent workloads are executed, scaled, and optimized in production.

“We are seeing AI agents’ code output grow exponentially, and as a result Daytona is seeing the need for more and more sandboxes to run and compile this code, which runs on CPUs like Intel’s Xeon,” said Ivan Burazin, CEO of Daytona, a secure coding infrastructure company for agentic AI.

"Production inference is moving toward heterogeneous hardware — no single chip type is optimal for every stage of an agentic workflow. What makes the Intel and SambaNova blueprint stand out is that it pairs reconfigurable RDUs for fast decode with Intel Xeon CPUs for agent tool execution — delivering premium performance with fewer chips and full compatibility with the software ecosystem enterprises already run on," said Banghua Zhu, co-founder and CTO at RadixArk.

Why Intel Xeon 6 and SambaNova RDUs

The jointly engineered architecture is centered on Intel Xeon 6 processors and SambaNova RDUs. The SN50 RDU is designed to change the tokenomics of inference, delivering high‑throughput, low‑latency decode for large language models, while Xeon 6 provides the memory bandwidth, PCIe lane density, and on‑die accelerators needed for its host and action CPU roles.

In SambaNova’s measurements, Xeon 6 compiles LLVM more than 50% faster than Arm‑based server CPUs and delivers up to 70% faster vector database performance than available x86‑based competitors. This accelerates end‑to‑end coding agent workflows, allowing developers to move from idea to production‑ready agents faster.

In this new design:

- GPUs handle the highly parallel prefill phase, turning long prompts into key‑value caches efficiently.

- SambaNova RDUs sit alongside Xeon 6 as the dedicated inference fabric for high‑throughput, low‑latency decode, ensuring that once the CPUs have set up the work, tokens are generated quickly and efficiently.

- Xeon 6 is the host CPU and system control plane, responsible for agentic task coordination, workload distribution, tool and API execution, and system‑level behavior, while also serving as the action CPU that compiles and executes code and validates results.
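
As an illustration only, the division of labor in the three bullets above can be sketched as a small routing table. This is a minimal sketch, not SambaNova or Intel software: the names `Stage`, `STAGE_TO_POOL`, `AgentStep`, `Orchestrator`, and `plan`, along with the pool labels `gpu`, `rdu`, and `xeon6`, are hypothetical and chosen only to mirror the roles described in the announcement.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    PREFILL = "prefill"  # long prompt -> key-value cache
    DECODE = "decode"    # token-by-token generation
    TOOL = "tool"        # compile and run code, call APIs, validate results


# Hypothetical pool labels; the mapping mirrors the three bullets above.
STAGE_TO_POOL = {
    Stage.PREFILL: "gpu",
    Stage.DECODE: "rdu",
    Stage.TOOL: "xeon6",
}


@dataclass
class AgentStep:
    prompt: str
    needs_tool: bool = False


@dataclass
class Orchestrator:
    """Stands in for the host CPU control plane; records where each stage is sent."""
    trace: list = field(default_factory=list)

    def dispatch(self, stage: Stage, payload: str) -> str:
        # Look up the device pool for this stage and log the routing decision.
        pool = STAGE_TO_POOL[stage]
        self.trace.append((stage.value, pool))
        return f"{pool}:{stage.value}({payload})"

    def run_step(self, step: AgentStep) -> list:
        # Prefill first, then decode, then optional tool execution.
        results = [self.dispatch(Stage.PREFILL, step.prompt)]
        results.append(self.dispatch(Stage.DECODE, "kv_cache"))
        if step.needs_tool:
            results.append(self.dispatch(Stage.TOOL, "generated_code"))
        return results


def plan(step: AgentStep) -> list:
    """Return the (stage, pool) routing trace for a single agent step."""
    orch = Orchestrator()
    orch.run_step(step)
    return orch.trace
```

Calling `plan(AgentStep("fix the failing test", needs_tool=True))` yields a trace that sends prefill to the GPU pool, decode to the RDU pool, and tool execution to the Xeon 6 pool. A real deployment would move key-value caches and tool results between devices rather than passing placeholder strings.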

“SambaNova’s collaboration with Intel reflects where Agentic AI infrastructure is heading: Xeon 6 handling control and orchestration, with RDUs focused on decode. One chip no longer does everything. This split of architectures is exactly what enterprises seek, not because it is split, but it aims to provide better performance, efficiency, and a system-level balance for AI in production environments,” said Ian Cutress, CEO and Chief Analyst at More Than Moore.

Designed for the Data Centers That Already Exist

For enterprises, government agencies, and sovereign AI programs operating under strict data residency or security requirements, every component of the inference stack must be colocated inside a controlled environment. Unlike the newest GPU-only architectures that require specialized liquid-cooled facilities with custom power infrastructure, the SambaNova and Intel solution deploys in standard, air-cooled data centers that enterprises, regional cloud providers, and national AI programs already operate worldwide. Organizations in financial services, healthcare, defense, and sovereign AI initiatives can run production-scale agentic AI fully in-house — without exporting sensitive data and without building new facilities.

Accelerating the next phase of AI

This announcement marks a clear progression from partnership to large‑scale commercial deployment, signaling confidence in the technology and offering a strong, competitive solution for enterprises, service providers, and global cloud platforms.

About SambaNova

SambaNova is a leader in next‑generation AI infrastructure, providing a full stack platform that powers efficient AI inference for enterprises, NeoClouds, AI labs and service providers, and sovereign AI initiatives worldwide. Founded in 2017 and headquartered in San Jose, Calif., SambaNova delivers chips, systems, and cloud services that enable customers to deploy state‑of‑the‑art models with superior performance, lower total cost of ownership, and rapid time to value.

For more information, visit sambanova.ai or follow SambaNova on X and LinkedIn.

© Intel Corporation. Intel, the Intel logo, and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.

Contacts

Virginia Jamieson, Head of Communications, SambaNova

virginia.jamieson@sambanova.ai