New ISACA Research: 56% of Digital Trust Pros Don’t Know How Fast They Could Shut Down AI After a Security Incident

Select advance results from ISACA’s 2026 AI Pulse Poll, which gathered responses from more than 3,400 digital trust professionals, find that amidst increasing AI utilization at enterprises, there appears to be limited human oversight over AI decision-making, little disclosure around AI use, and uncertainty around AI security incident response and accountability for AI system harm.

SCHAUMBURG, Ill.--(BUSINESS WIRE)--AI technology is being adopted rapidly within many workplaces, but organizations are not necessarily keeping up with the governance and security measures needed to protect themselves from its risks, according to an advance look at select findings from ISACA’s 2026 AI Pulse Poll, which examines the latest trends related to AI use, policies and standards, workforce impact, incident response security, and more.

The global pulse poll, which gathered responses from more than 3,400 digital trust professionals, finds that amidst increasing AI utilization at enterprises, there appears to be limited human oversight over AI decision-making, little disclosure around AI use, and uncertainty around AI security incident response and accountability for AI system harm.

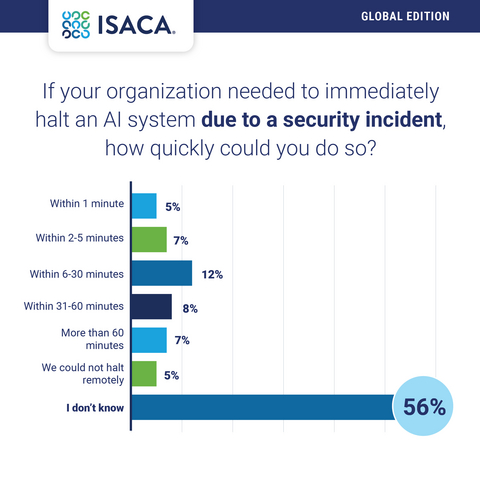

More than half of respondents (56 percent) indicate they do not know how quickly they could halt an AI system in the event of a security incident. Thirty-two percent believe they could halt it within 60 minutes, and 7 percent say it would take them more than 60 minutes.

Additionally, fewer than half of respondents (43 percent) have high confidence in their organization’s ability to investigate a serious AI system incident and explain it to leadership or regulators, while 27 percent express low to no confidence.

“While organizations may feel the push to adopt AI technology quickly to keep pace and leverage its capabilities, it is imperative they have the proper guardrails and governance in place before doing so,” said Jenai Marinkovic, vCISO/CTO, Tiro Security, co-founder and board chair of GRCIE, and ISACA Emerging Trends Working Group member. “Enterprises need to ensure the right people, policies, processes, and plans are in place to be able to not only use AI effectively and responsibly, but also to avoid potential major disruption if crisis hits.”

And when it comes to who is ultimately responsible if an AI system causes harm or serious error in their organization, respondents largely point to their board/executives (28 percent). Eighteen percent believe that their CIO/CTO would be responsible, 13 percent assign the responsibility to their CISO, and 20 percent admit that they do not know where the responsibility would lie.

Many of the AI-generated actions taking place at organizations appear to happen without human oversight: only 36 percent of respondents say humans approve most AI-generated actions before execution, and 26 percent note that humans review selected decisions or patterns after execution. Eleven percent say humans intervene only when alerted to potential issues, and 20 percent admit they do not know how humans oversee AI decision-making at their organization.

Also, only 18 percent of poll respondents indicate that disclosure is required and enforced if AI has been used to create or substantially assist with work products, while 20 percent say that disclosure is required but not consistently enforced. Nearly a third (32 percent) note that no disclosure requirements exist.

To meet the needs of digital trust professionals seeking the training, knowledge and best practices to keep pace in the age of AI, ISACA has recently released a range of AI courses and resources, as well as three new credentials: Advanced in AI Audit (AAIA), Advanced in AI Security Management (AAISM), and the upcoming Advanced in AI Risk (AAIR).

The full 2026 AI Pulse Poll from ISACA will be released in May 2026. Learn more about these findings in this blog post. For more AI resources, visit www.isaca.org/resources/artificial-intelligence, or learn more at ISACA booth S-2167 at RSAC Conference 2026, or during Marinkovic’s RSAC session, “From Promise to Practice: How AI Is Reshaping Cybersecurity,” on Thursday, 26 March at 9:40 am PT.

About ISACA

For more than 55 years, ISACA® (www.isaca.org) has empowered its community of 195,000+ members, spanning more than 230 chapters in more than 190 countries, with the knowledge, credentials, training and network they need to thrive in fields like information security, governance, assurance, risk management, data privacy and emerging tech. Through the ISACA Foundation, ISACA also expands IT and education career pathways, fostering opportunities to grow the next generation of technology professionals.

Contacts

communications@isaca.org

Emily Ayala, +1.847.385.7223